USMNT news: Weah joins Juventus, Adams to West Ham, Horvath moving on

Today's USMNT news includes Timothy Weah officially joining Juventus, West Ham targeting Tyler Adams and Nottingham Forest looking to move on Ethan Horvath. USMNT news: Timothy Weah joins Juventus. Timothy Weah has officially been announced as a Juventus player. The USMNT winger joins the club...

2023-07-02 21:32

Lawyers for Trump ally Lindell seek to quit election defamation cases

By David Thomas. Lawyers for My Pillow CEO Mike Lindell sought permission on Thursday to quit representing him

2023-10-06 05:15

FanDuel Golf Promo Awards $100 For Betting on Rory McIlroy at the British Open

Listed as a heavy favorite at +650 odds, a $5 bet on Rory McIlroy to win The Open Championship would typically profit you just $35. If you sign up with FanDuel Sportsbook and bet $5 or more on McIlroy to win the British Open, you’ll win $100 in bonus bets – even if he doesn’t...

2023-07-18 01:00

Fran Drescher responds to criticism about her Italy trip and pic with Kim Kardashian days before SAG strike

Fran Drescher, the president of the SAG-AFTRA union, responded to criticism on Thursday for traveling to Italy to attend Dolce & Gabbana's Alta Moda festivities this past weekend as her 160,000-member actors' union faced a deadline to go on strike.

2023-07-14 06:34

'He never swore': Donny Osmond remembers his 'tough' father whenever he feels like cursing people

Donny Osmond has a simple rule when it comes to swearing: don’t do it

2023-09-10 03:14

'I'm a dad first': Ryan Gosling reveals he took 4-year-long break from acting to be with wife Eva Mendes and children

Ryan Gosling, 42, claimed that he made the choice when he and his wife, 49-year-old Eva Mendes, welcomed their second child

2023-06-01 19:14

Trump defends Jason Aldean amid music video backlash

Former President Donald Trump spoke out in favor of country singer Jason Aldean amid controversy around one of his new music videos. “Jason Aldean is a fantastic guy who just came out with a great new song. Support Jason all the way. MAGA!!!” the former president wrote on Truth Social on Thursday.
Online critics blasted the “Try That In A Small Town” music video after discovering it was filmed outside the Maury County Courthouse in Columbia, Tennessee, where 18-year-old Black teenager Henry Choate was lynched in 1927, and where the Columbia race riot took place in 1946. As of Wednesday, Country Music Television said it would not air the music video, USA Today reported. The video was released on Friday. Critics have accused the song of “promoting violence” and lynchings.
Mr Aldean responded to the criticism in a lengthy tweet on Tuesday. He said that, for him, the song “refers to the feeling of a community that I had growing up, where we took care of our neighbors, regardless of differences of background or belief. Because they were our neighbors, and that was above any differences.” He added, “while I can try and respect others to have their own interpretation of a song with music – this one goes too far.”
The country singer is a mass shooting survivor. Shannon Watts, founder of Moms Demand Action, reacted to the song’s lyrics, writing that Mr Aldean, “who was on-stage during the mass shooting at a Las Vegas concert in 2017 that killed 60 people and wounded over 400 more, has recorded a song called ‘Try That In A Small Town’ about how he and his friends will shoot you if you try to take their guns.”
Fellow 2024 presidential candidate and Florida Gov Ron DeSantis also chimed in with support for the country singer in an interview on “Fox & Friends”: “We need to restore sanity to this country. I mean, what is going on that that would be something that would be censored? I mean, give me a break. We’re off the rocker here.”
South Dakota Republican Gov Kristi Noem posted a video on Wednesday with her reaction to the music video’s backlash: “I’m shocked by what I’m seeing with people attempting to cancel the song, cancel Jason.” She added, “Thank you for writing a song that America can get behind.”

2023-07-21 03:46

Michael O’Neill wants Shea Charles to learn from dismissal on frustrating night

Michael O’Neill has told Shea Charles he must learn from his dismissal after Northern Ireland suffered yet another 1-0 defeat in Euro 2024 qualifying, this time at home to Slovenia.
The 19-year-old Charles has been one of the bright spots for Northern Ireland in a hugely frustrating qualifying campaign, among the young players who have grabbed the chance to establish themselves in the side amid an injury nightmare.
But his international copybook got its first blemish as he collected two yellow cards to be sent off just before the hour mark at Windsor Park, meaning his run of starting every game so far in this campaign will end when Northern Ireland head to Finland next month.
The Southampton midfielder was booked for dissent just a few minutes into the match, protesting against the dubious decision to award Slovenia the free-kick from which Adam Cerin won the game, and then saw red when he caught Andraz Sporar late in the 58th minute.
Northern Ireland had been frustrated by several decisions from referee Istvan Kovacs on the night, but O’Neill said that was something they had to be able to handle.
“This is a learning curve for young players,” he said. “(Slovenia) are a much more experienced international team than we are. You can see that in the way they managed the situation and played the referee a little bit.
“The emotion in the stadium obviously transferred to the players a little bit, everyone gets a bit frustrated with some of the decisions… If you’re booked for dissent, that’s poor. You put yourself under pressure so we have to learn from that.”
“We’ve probably seen a little combination of inexperience in a number of players and also just the nature of the emotion in the game when you’re chasing the game against a team that are a little bit more experienced and that can spill over a little bit.
“But I think that on the night we were pretty disappointed with the performance of the referee.”
This was Northern Ireland’s fifth 1-0 defeat of a campaign in which they have faced endless injury problems, with O’Neill forced to use two more fresh faces, Eoin Toal and Brad Lyons, on the night to take the number who have played in the eight qualifiers so far to 31.
O’Neill could rightly argue that this performance was a step forward from last month’s 4-2 defeat to Slovenia in Ljubljana, considering the way a makeshift defence was able to stifle Benjamin Sesko (who went down easily to win the decisive free-kick off Jamal Lewis) and Sporar.
But ultimately it was another defeat, a sixth out of eight, with only two wins over minnows San Marino to break up the run.
“I think there is always frustration when you lose the game – and a little bit of disappointment as well,” he said. “I think the players deserved more out of it than what they got. We have had a frustrating campaign, a very challenging campaign and tonight’s game was probably a reflection of that once again.”
Captain Jonny Evans ended the night limping heavily after taking a late blow to his foot, having already been down in the first half to receive treatment.
“He’s obviously hobbling a little bit in there,” O’Neill said of the Manchester United defender. “I think the same foot was stamped on three times so he’s limping pretty badly but I think he’ll be fine.
“It will be one of those where when he wakes up in the morning he’ll be pretty sore but there’s no real damage as far as I know.”

2023-10-18 06:16

Tesla sues Swedish agency as striking workers halt delivery of license plates of its new vehicles

Tesla has filed a lawsuit against the Swedish Transport Agency as striking workers in the Scandinavian country halted the delivery of license plates of new vehicles manufactured by the Texas-based automaker

2023-11-27 20:47

Keegan Bradley wins Travelers Championship, breaks tournament record by 1 shot

Keegan Bradley built a big enough lead in front of adoring New England fans that he broke the tournament record at the Travelers Championship despite a shaky closing stretch

2023-06-26 06:58

A surge in rail traffic on North Korea-Russia border suggests arms supply to Russia, think tank says

A U.S. think tank says recent satellite photos show a sharp increase in rail traffic along the North Korea-Russia border, indicating the North is supplying munitions to Russia

2023-10-08 23:56

Josh Wolff hails Austin FC for draw without DP Sebastian Driussi

Despite injuries, Austin FC grind out a 2-2 draw against the Portland Timbers.

You Might Like...

Clemson football: What is Dabo Swinney’s buyout with Tigers?

SME, Stratasys Announce Winners of 2023 SkillsUSA Additive Manufacturing Competition

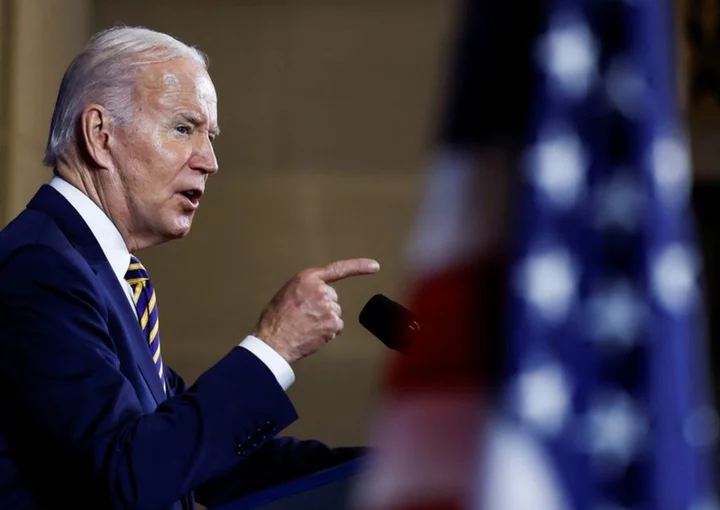

Tribes split over Biden plan to ban drilling near New Mexico cultural site

Afghan women face 'pandemic of suicidal thoughts'

8 Facts About Labor Day

Browns' No. 1 defense faces toughest test of early season in Ravens' dual-threat QB Lamar Jackson

Welch's Strengthens Leadership Team with Appointments of New Chief Financial Officer and Chief People Officer

Full Valorant Patch 5.01 Notes Details Phoenix Buffs