Pakistan shut down the internet - but that didn't stop the protests

Millions were plunged offline after Imran Khan's arrest but the blackout hasn't stopped protests.

1970-01-01 08:00

AI pioneer warns Government offering little defence against threat of technology

One of the pioneers of artificial intelligence has warned the Government is not safeguarding against the dangers posed by future super-intelligent machines. Professor Stuart Russell told The Times ministers were favouring a light touch on the burgeoning AI industry, despite warnings from civil servants it could create an existential threat. A former adviser to both Downing Street and the White House, Professor Russell is a co-author of the most widely used AI textbook and lectures on computer science at the University of California, Berkeley. He told The Times a system similar to ChatGPT – which has passed exams and can compose prose – could form part of a super-intelligence machine which could not be controlled. “How do you maintain power over entities more powerful than you – forever?” he asked. “If you don’t have an answer, then stop doing the research. It’s as simple as that. “The stakes couldn’t be higher: if we don’t control our own civilisation, we have no say in whether we continue to exist.” In March, he co-signed an open letter with Elon Musk and Apple co-founder Steve Wozniak warning of the “out-of-control race” going on at AI labs. The letter warned the labs were developing “ever more powerful digital minds that no one, not even their creators, can understand, predict or reliably control”. Professor Russell has worked for the UN on a system to monitor the nuclear test-ban treaty and was asked to work with the Government earlier this year. “The Foreign Office… talked to a lot of people and they concluded that loss of control was a plausible and extremely high-significance outcome,” he said. “And then the Government came out with a regulatory approach that says: ‘Nothing to see here… we’ll welcome the AI industry as if we were talking about making cars or something like that’.” He said making changes to the technical foundations of AI to add necessary safeguards would take “time that we may not have”.
“I think we got something wrong right at the beginning, where we were so enthralled by the notion of understanding and creating intelligence, we didn’t think about what that intelligence was going to be for,” he said. “Unless its only purpose is to be a benefit to humans, you are actually creating a competitor – and that would be obviously a stupid thing to do. “We don’t want systems that imitate human behaviour… you’re basically training it to have human-like goals and to pursue those goals. “You can only imagine how disastrous it would be to have really capable systems that were pursuing those kinds of goals.” He said there were signs of politicians becoming aware of the risks. “We’ve sort of got the message and we’re scrambling around trying to figure out what to do,” he said. “That’s what it feels like right now.” The Government has launched the AI Foundation Model Taskforce which it says will “lay the foundations for the safe use of foundation models across the economy and ensure the UK is at the forefront of this pivotal AI technology”.

1970-01-01 08:00

Scientists discover huge caves made by giant sloths

A number of huge tunnels that were discovered in South America at the turn of the century may have been made by giant sloths. At the turn of the century, geology professor Heinrich Frank spotted a strange hole on a highway in Brazil and crawled inside. There, he realised the tunnel was 4.5 meters (15 feet) long. He also found a collection of giant claw marks on the ceiling. “There’s no geological process in the world that produces long tunnels with a circular or elliptical cross-section, which branch and rise and fall, with claw marks on the walls,” Frank told Discover, adding he’s “seen dozens of caves that have inorganic origins, and in these cases, it’s very clear that digging animals had no role in their creation.” The tunnel, along with many others that he and others discovered in Brazil and Argentina, is thought to have been made 8,000-10,000 years ago by extinct giant sloths that were around the size of an African elephant. In the Rio Grande do Sul area, Frank and his team found over 1,500 tunnels made by these giant sloths, with the longest stretching for 609 meters (2,000 feet) and standing 1.8 meters (6 feet) tall. Goodness.

1970-01-01 08:00

AI pioneer warns UK is failing to protect against ‘existential threat’ of machines

One of the pioneers of artificial intelligence has warned the government is not safeguarding against the dangers posed by future super-intelligent machines. Professor Stuart Russell told The Times ministers were favouring a light touch on the burgeoning AI industry, despite warnings from civil servants it could create an existential threat. A former adviser to both Downing Street and the White House, Prof Russell is a co-author of the most widely used AI textbook and lectures on computer science at the University of California, Berkeley. He told The Times a system similar to ChatGPT – which has passed exams and can compose prose – could form part of a super-intelligence machine which could not be controlled. “How do you maintain power over entities more powerful than you – forever?” he asked. “If you don’t have an answer, then stop doing the research. It’s as simple as that. “The stakes couldn’t be higher: if we don’t control our own civilisation, we have no say in whether we continue to exist.” In March, he co-signed an open letter with Elon Musk and Apple co-founder Steve Wozniak warning of the “out-of-control race” going on at AI labs. The letter warned the labs were developing “ever more powerful digital minds that no one, not even their creators, can understand, predict or reliably control”. Prof Russell has worked for the UN on a system to monitor the nuclear test-ban treaty and was asked to work with the Government earlier this year. “The Foreign Office … talked to a lot of people and they concluded that loss of control was a plausible and extremely high-significance outcome,” he said. “And then the government came out with a regulatory approach that says: ‘Nothing to see here… we’ll welcome the AI industry as if we were talking about making cars or something like that’.” He said making changes to the technical foundations of AI to add necessary safeguards would take “time that we may not have”. 
“I think we got something wrong right at the beginning, where we were so enthralled by the notion of understanding and creating intelligence, we didn’t think about what that intelligence was going to be for,” he said. “Unless its only purpose is to be a benefit to humans, you are actually creating a competitor – and that would be obviously a stupid thing to do. “We don’t want systems that imitate human behaviour… you’re basically training it to have human-like goals and to pursue those goals. “You can only imagine how disastrous it would be to have really capable systems that were pursuing those kinds of goals.” He said there were signs of politicians becoming aware of the risks. “We’ve sort of got the message and we’re scrambling around trying to figure out what to do,” he said. “That’s what it feels like right now.” The government has launched the AI Foundation Model Taskforce which it says will “lay the foundations for the safe use of foundation models across the economy and ensure the UK is at the forefront of this pivotal AI technology”.

1970-01-01 08:00

Biden previews 2024 election pitch to young Black voters in Howard University commencement speech

President Joe Biden previewed his 2024 election pitch to young Black voters Saturday in commencement remarks at a Howard University graduation ceremony in Washington, DC, articulating his vision of a "future for all Americans,"

1970-01-01 08:00

Former ByteDance Exec Claims TikTok Stole Content From Competitors

TikTok owner ByteDance stole content from Snapchat and Instagram to boost TikTok engagement, according to

1970-01-01 08:00

Humans could be controlled by robots, AI firm’s founder warns

Robots could end up controlling humanity, the founder of an artificial intelligence firm will warn. Emad Mostaque, 40, who founded Stability AI three years ago, will say this could happen in a “worst case scenario” and humans could be told “goodbye, you’re kind of boring”. However, governments could soon be shocked into regulating the machines by an event that suddenly makes their impact real, he will add. In an interview with the BBC’s Sunday With Laura Kuenssberg programme, he will say: “If you have a more capable thing than you, what is democracy in that kind of environment? “This is a known unknown because we can’t conceive of something more capable than us but we all know people more capable than us. “My personal belief is that it will be like that movie Her with Scarlett Johansson and Joaquin Phoenix, humans are a bit boring and it will be like ‘goodbye, you’re kind of boring’, but I could be wrong. “It deserves to be discussed in a public sphere, if we have agents more capable than us that we cannot control, that are going across the internet and hooked up and they achieve a level of automation, what does that mean? “The worst case scenario is that it proliferates and basically it controls humanity because you could have a million things replicating effectively, but we don’t know.” He believes the moment that actor Tom Hanks caught coronavirus in March 2020 was the moment millions understood the risk of the novel disease. When a similar moment arrives with artificial intelligence governments will conclude “we need policy now”, he will claim. The impact of the new machines could be “painful” to begin with and their effect on the economy could be greater than that caused by the pandemic, he believes.
However, he thinks the jobs which disappear will be replaced by better ones because machines will do menial tasks, allowing us to concentrate on the things which make us human. The new technology could also bring “huge” benefits, he claims. Companies such as ChatGPT and DeepMind will be bigger than Google and Facebook in 10 years’ time, he adds. Stability AI has already been valued at 1 billion dollars (£803 million) and could soon be worth 4 billion dollars (£3.2 billion) as more money, including from Hollywood star Ashton Kutcher, floods into it. The company created Stable Diffusion, a tool which uses AI to make images from simple text instructions by analysing pictures found online. Mr Mostaque, a mathematician, is determined to keep his technology open source – allowing anyone to look at the code, share it and use it. He believes this should give the public the confidence that the technology will not become too dangerous. He will say: “I think there shouldn’t have to be a need for trust. “If you build open models and you do it in the open, you should be criticised if you do things wrong and hopefully lauded if you do some things right.” However, Getty Images is currently engaged in legal action against his company, with the photo agency claiming the rights to the images it sells have been infringed.

1970-01-01 08:00

Kate Isaacs: #NotYourPorn founder discovers deepfake porn of herself online, says every woman is 'potential victim'

Kate Isaacs, 30, found a deepfake video of her face in a sexual act video

1970-01-01 08:00

Nepali sherpa becomes world’s second person to scale Everest 26 times

By Gopal Sharma KATHMANDU A Nepali sherpa guide climbed Mount Everest for the 26th time on Sunday, hiking

1970-01-01 08:00

Mushrooms appear to have 'conversations' with each other after it rains

What do you reckon the chattiest vegetable is? The answer may be mushrooms: according to a new study from scientists in Japan, rain may prompt some fungi to communicate using underground electrical signals. In a study published in Fungal Ecology, researchers monitored small, tan mushrooms known as bicoloured deceivers in the mixed forest at the Kawatabi Field Science Center of Tohoku University in Japan. They looked at the 'shrooms' electrical potential, measured in millivolts (mV), for about two days in late September and early October 2021. The study site was initially sunny and dry, and then it rained, at which point the mushrooms showed some electrical potential and signal transport between each other. Microbial ecologist Yu Fukasawa of Tohoku University said: "Our results confirm the need for further studies on fungal electrical potentials under a true ecological context." Previously, scientists had found that these mushrooms make subterranean "sheaths" around the exterior of a tree's roots. These sheaths are made of hyphae and when they link underground they form interconnected systems known as mycorrhizal networks that allow forests to communicate via chemical signals down tree roots and mycorrhizal fungi. And a 2022 study found patterns of nerve-like electrical activity in some fungi that seem comparable to the structure of human speech. The study identified up to 50 different "words," or groups of spikes in electrical activity, generated by fungal networks. Earlier research has also found that plants can send secret electrical signals underground, possibly even without help from mycorrhizal fungi. Who knew mushrooms had so much to say?

1970-01-01 08:00

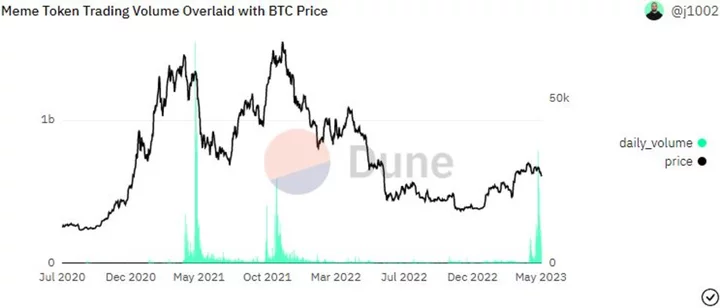

Bitcoin Bulls Trip on a Frog as Pepe Memecoin Frenzy Signals Market Top

A frog-themed digital token that’s only been around for a month may be signaling pain ahead for Bitcoin

1970-01-01 08:00

Cash App founder Bob Lee had affair with suspected killer’s sister within secret party scene, report says

When Bob Lee, a well-known tech executive who co-founded the payment programme Cash App, was stabbed to death in April, many within San Francisco’s close-knit tech community leapt to conclusions, with figures like Elon Musk declaring the death another sign of the city’s persistent, if often misunderstood, struggles with random street crime. What actually happened, according to prosecutors and friends of Lee, couldn’t be further from this original narrative. Lee was part of an underground party scene in San Francisco known among participants as “The Lifestyle,” where recreational drugs and casual sex were common, participants and those who knew Lee told The Wall Street Journal. One of the people Lee overlapped with within San Francisco nightlife was Khazar Momeni, sister of Nima Momeni, the man arrested in April for Lee’s murder. He plans to plead not guilty. Lee and Ms Momeni, who is married, were reportedly in a casual relationship. “There are many rumors circulating around this case, many of them untrue,” lawyers for Ms Momeni told the Journal. “Ms. Momeni loves and supports her brother. What happened here is a tragedy, and Ms. Momeni is deeply saddened at the suffering of the Lee family as they deal with their terrible loss.” In the hours before Lee was killed, Mr Momeni confronted Lee about his sister, prosecutors allege, asking if she had done anything inappropriate, which he denied. Later, according to officials, Khazar Momeni sent Lee a text message acknowledging the confrontation: “Just wanted to make sure your doing ok Cause know nima came wayyyyyy down hard on you.” Hours after the alleged confrontation, Lee was seen getting into a white BMW with Mr Momeni, and prosecutors allege he drove the tech executive to a secluded area and stabbed him to death with a kitchen knife. The Independent has contacted Mr Momeni’s lawyer for comment. Lee had been using cocaine and ketamine before his death, an autopsy found.
Mr Momeni, an IT executive, will be arraigned later this month.

1970-01-01 08:00