Scientists release findings from major study into internet and mental health – with surprising conclusion

There is no clear link between mobile phones and the internet and a negative impact on mental wellbeing, the authors of a major new study have found. Researchers took data on two million people aged between 15 and 89, from 168 countries. While they found that negative and positive experiences had both increased, they found little evidence that this was the result of the prevalence of the internet. The results from the major study, led by the Oxford Internet Institute, contradict widespread speculation that the internet – and especially its widespread availability through mobile devices – has damaged mental wellbeing. The researchers said that if the link between internet use and poor health were as universal and robust as many think, they would have found it. However, the study did not look at social media use, and although the data included some young people, the researchers did not analyse how long people spent online. Professor Andrew Przybylski, of the Oxford Internet Institute, and Assistant Professor Matti Vuorre, of Tilburg University and a research associate at the Oxford Internet Institute, carried out the research into home and mobile broadband use. Prof Przybylski said: “We looked very hard for a ‘smoking gun’ linking technology and wellbeing and we didn’t find it.” He added: “The popular idea that the internet and mobile phones have a blanket negative effect on wellbeing and mental health is not likely to be accurate. “It is indeed possible that there are smaller and more important things going on, but any sweeping claims about the negative impact of the internet globally should be treated with a very high level of scepticism.” Looking at the results by age group and gender did not reveal any specific patterns among internet users, including women and young girls. Instead, the study, which looked at data for the past two decades, found that for the average country, life satisfaction increased more for females over the period.
Data from the United Kingdom was included in the study, but the researchers say there was nothing distinctive about the UK compared with other countries. Although the study included a lot of information, the researchers say technology companies need to provide more data if there is to be conclusive evidence of the impacts of internet use. They explain: “Research on the effects of internet technologies is stalled because the data most urgently needed are collected and held behind closed doors by technology companies and online platforms. “It is crucial to study, in more detail and with more transparency from all stakeholders, data on individual adoption of and engagement with internet-based technologies. “These data exist and are continuously analysed by global technology firms for marketing and product improvement but unfortunately are not accessible for independent research.” For the study, published in the Clinical Psychological Science journal, the researchers looked at data on wellbeing and mental health against a country’s internet users and mobile broadband subscriptions and use, to see if internet adoption predicted psychological wellbeing. In the second study they used data on rates of anxiety, depression and self-harm from 2000-2019 in some 200 countries. Wellbeing was assessed using data from face-to-face and phone surveys by local interviewers, and mental health was assessed using statistical estimates of depressive disorders, anxiety disorders and self-harm in some 200 countries from 2000 to 2019.

2023-11-28 08:01

Software firm Cloudsmith announces £8.8m investment

A Belfast-based software supply chain management firm has announced an £8.8m investment. Cloudsmith will use the funding to grow operations for its global client base, including leading software companies such as Shopify, PagerDuty, Font Awesome, HP and EnterpriseDB. The funding, led by MMC Ventures, will bolster the firm’s ability to deliver a software supply chain platform. Cloudsmith provides organisations with a single source for managing all their software assets, including datasets required to build the AI products of the future. Recently appointed chief executive officer Glenn Weinstein said the industry demand for software supply chain solutions is surging. He said: “Despite economic headwinds and a slow venture capital funding market, this announcement reaffirms the confidence our investors have in Cloudsmith. “We’ve been successfully disrupting and reinventing the software supply chain market. “This fresh infusion of capital also comes as industry demand for secure and reliable software supply chain solutions is surging. “Cybersecurity attacks of increasing severity have become more frequent, and threaten reputational damage, data exfiltration and IP theft.” The firm’s software supply chain management platform is designed to meet the needs of software teams building for internal use or distributing software packages to the market. It provides a suite of artefact storage, management and distribution solutions, allowing developers and companies to streamline and control their software supply chain, improve collaboration and accelerate product delivery. Mr Weinstein added: “This funding will be used to enhance Cloudsmith’s unique cloud-native software supply chain solution, which is faster, more secure and of higher value than the legacy on-premises vendors we’re displacing.
“Cloudsmith is a great choice for companies with software teams distributed in remote locations, and while the US is our largest market, we continue to see increased demand from a range of countries including the UK, Germany and Australia.” He emphasised the strategic importance of the firm’s Belfast headquarters, which benefits from access to both UK and EU markets. “Belfast is a leading tech hub with a thriving digital economy. “We see this renewed round of investment as a doubling down on Cloudsmith’s commitment to this vibrant city.”

2023-11-28 08:01

Investors Rethink Stock Bets Ahead of Sweeping New Carbon Laws

The price of carbon is going up. While no one knows exactly how high it will go or

2023-11-28 04:00

Rivian launches leasing for R1T electric pickup truck in some US states

Rivian Automotive on Monday announced the launch of leasing for its R1T electric pickup truck for customers in

2023-11-28 03:56

More US shoppers tack on buy now, pay later debt for Cyber Monday

By Arriana McLymore and Deborah Mary Sophia NEW YORK A record amount of price-pinched holiday shoppers are expected

2023-11-28 02:56

UK, US and other governments release rules to stop AI being hijacked by rogue actors

The UK, US and other governments have released plans they hope will stop artificial intelligence being hijacked by rogue actors. The major agreement – hailed as the first of its kind – represents an attempt to codify rules that will keep AI safe and ensure that systems are built to be secure by design. In a 20-page document unveiled Sunday, the 18 countries agreed that companies designing and using AI need to develop and deploy it in a way that keeps customers and the wider public safe from misuse. The agreement is non-binding and carries mostly general recommendations such as monitoring AI systems for abuse, protecting data from tampering and vetting software suppliers. Still, the director of the U.S. Cybersecurity and Infrastructure Security Agency, Jen Easterly, said it was important that so many countries put their names to the idea that AI systems needed to put safety first. “This is the first time that we have seen an affirmation that these capabilities should not just be about cool features and how quickly we can get them to market or how we can compete to drive down costs,” Easterly told Reuters, saying the guidelines represent “an agreement that the most important thing that needs to be done at the design phase is security.” The agreement is the latest in a series of initiatives - few of which carry teeth - by governments around the world to shape the development of AI, whose weight is increasingly being felt in industry and society at large. In addition to the United States and Britain, the 18 countries that signed on to the new guidelines include Germany, Italy, the Czech Republic, Estonia, Poland, Australia, Chile, Israel, Nigeria and Singapore. The framework deals with questions of how to keep AI technology from being hijacked by hackers and includes recommendations such as only releasing models after appropriate security testing. It does not tackle thorny questions around the appropriate uses of AI, or how the data that feeds these models is gathered. 
The rise of AI has fed a host of concerns, including the fear that it could be used to disrupt the democratic process, turbocharge fraud, or lead to dramatic job loss, among other harms. Europe is ahead of the United States on regulations around AI, with lawmakers there drafting AI rules. France, Germany and Italy also recently reached an agreement on how artificial intelligence should be regulated that supports “mandatory self-regulation through codes of conduct” for so-called foundation models of AI, which are designed to produce a broad range of outputs. The Biden administration has been pressing lawmakers for AI regulation, but a polarized U.S. Congress has made little headway in passing effective regulation. The White House sought to reduce AI risks to consumers, workers, and minority groups while bolstering national security with a new executive order in October. Additional reporting by Reuters

2023-11-28 02:20

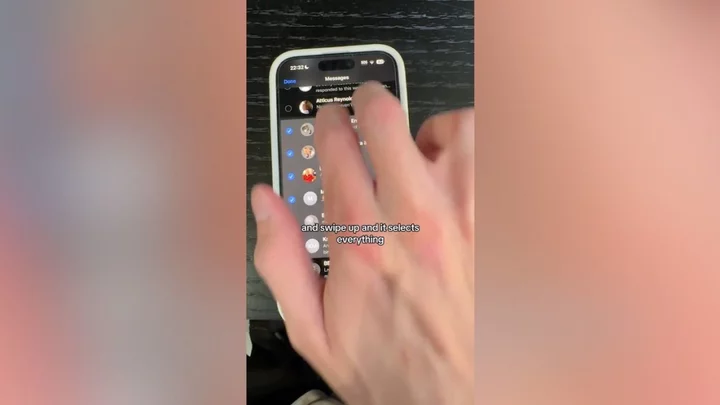

Ex-Apple employee reveals game-changing iPhone hacks everyone should know

A former Apple employee has been sharing some of the handy iPhone hacks he learnt while working at the tech giant - and we can't believe we didn't know them before. From tips as simple as holding your camera button down to record a video instead of swiping, to switching to a 'one-handed keyboard' to save your muscles aching, Tyler Morgan has completely changed the way his followers are using their phones. Arguably one of the most popular tips he recommended is that you can record voiceovers while screen recording, by swiping down to reveal a microphone button.

2023-11-28 00:27

Children are making indecent images using AI image generators, experts warn

Schoolchildren are using artificial intelligence systems to generate indecent images of other kids, experts have warned. The UK’s Safer Internet Centre, or UKSIC, said that schools had reported children trying to make indecent images of their fellow pupils with online AI image generators. The images themselves constitute child sexual abuse material and generating and sharing them could be a crime. But it could also have a drastically harmful impact on other children, or be used to blackmail them, experts warn. Some AI systems include safeguards specifically intended to stop them being used to generate adult images. But others do not, and what safeguards there are may be bypassed in some cases. UKSIC has urged schools to ensure that their filtering and monitoring systems are able to effectively block illegal material across school devices in an effort to combat the rise of such activity. David Wright, UKSIC director, said: “We are now getting reports from schools of children using this technology to make, and attempt to make, indecent images of other children. “This technology has enormous potential for good, but the reports we are seeing should not come as a surprise. “Young people are not always aware of the seriousness of what they are doing, yet these types of harmful behaviours should be anticipated when new technologies, like AI generators, become more accessible to the public. “We clearly saw how prevalent sexual harassment and online sexual abuse was from the Ofsted review in 2021, and this was a time before generative AI technologies. “Although the case numbers are currently small, we are in the foothills and need to see steps being taken now, before schools become overwhelmed and the problem grows. “An increase in criminal content being made in schools is something we never want to see, and interventions must be made urgently to prevent this from spreading further.
“We encourage schools to review their filtering and monitoring systems and reach out for support when dealing with incidents and safeguarding matters.” In October, the Internet Watch Foundation (IWF), which forms part of UKSIC, warned that AI-generated images of child sexual abuse are now so realistic that many would be indistinguishable from real imagery, even to trained analysts. The IWF said it had discovered thousands of such images online. Artificial intelligence has increasingly become an area of focus in the online safety debate over the last year, in particular since the launch of generative AI chatbot ChatGPT last November, with many online safety groups, governments and industry experts calling for greater regulation of the sector because of fears it is developing faster than authorities are able to respond to it. Additional reporting by Press Association

2023-11-28 00:18

Storm May Dump a Foot of Snow in Western New York: Weather Watch

A cold air front crossing Lakes Erie and Ontario could drop 12 to 18 inches (30.5 to 45.7

2023-11-27 22:01

Germany Approves Revised 2023 Budget Suspending Borrowing Limit

German Chancellor Olaf Scholz’s government approved a supplementary 2023 budget that includes the suspension of rules limiting net

2023-11-27 21:59

L3Harris to sell its commercial aviation solutions business for $800 million

L3Harris Technologies is selling its commercial aviation solutions business to private equity firm TJC L.P. for $800 million,

2023-11-27 21:57

Google’s latest smartphone has bizarre bumps on the screen

Owners of Google’s latest premium smartphone are experiencing strange bumps and ripples that appear on the device’s screen. Google claims the issue with the Google Pixel 8 Pro has “no functional impact to Pixel 8 performance or durability”, though some users have already returned their new phone in an effort to resolve it. Pixel owners shared their experiences with the issue across social media and on Google forums, expressing their frustration that there appears to be no fix. “I had this on mine as well,” a user called Constanza Juarez wrote. “Not visible on natural light but extremely visible under artificial light, both with screen on and/or off.” Another user wrote: “Even with a glass screen protector, I can see the same bumps when I examine the edges of my Pixel 8 Pro.” Bumps and ripples have been reported on the top and bottom left of the screen, above the SIM card tray, near the fingerprint scanner, as well as the top and bottom right of the display. Some even reported sending their bumpy phones back to Google, only to have the same issue occur with the replacement device. A video showing the Google Pixel 8 Pro being taken apart suggests that the internal mechanics of the smartphone are responsible for the screen bumps, which could complicate any attempts by the phone maker to rectify the issue on this particular model. “Notice how the spring clips in the right side of the Pixel 8 Pro line up exactly with the indents in the foil on the display side of the phone,” one owner noted. “It seems to be pretty clear that these clips are the cause of the bumps we are seeing in our displays.” Google has not revealed the exact internal phone part causing the uneven surface, but it did acknowledge that some users may see the bumps on their new smartphones. “Pixel 8 phones have a new display,” a company spokesperson said.
“When the screen is turned off, not in use and in specific lighting conditions, some users may see impressions from components in the device that look like small bumps. There is no functional impact to Pixel 8 performance or durability.”

2023-11-27 20:17

You Might Like...

Half of adults who chat online with strangers do not check age – poll

EU Green Goals Set to Cost Romania $356 Billion

Semtech Releases New Surge Protection Product to Safeguard Electronics

Former Meta employee tells Senate company failed to protect teens safety

Russians Appeared to Seek Refuge in Crypto During Wagner Revolt

China to Curb Graphite Exports. What It Means for Tesla and Other EV Stocks.

Scientists have located a legendary Egyptian city that never appeared on maps

University of Phoenix announces 2023 Faculty of the Year Award recipients